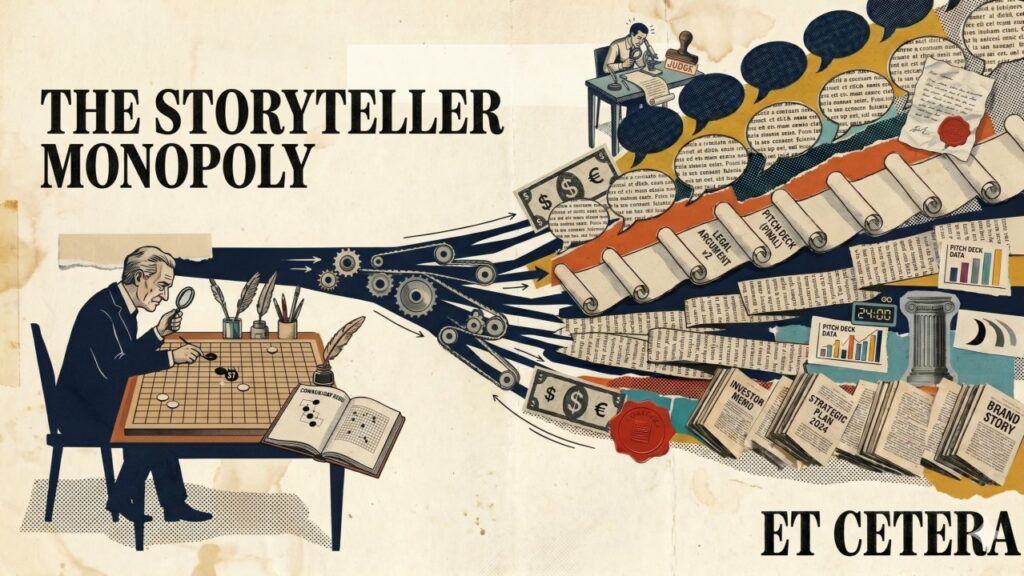

One of the most useful ways I've found to think about AI lately is pretty simple: it's a machine that makes plausible stories cheap.

That sounds smaller than it is. A lot of what we use to coordinate at scale is stories. Valuations are stories. Brands are stories. Strategies, job titles, contracts, even companies. They aren't lies. They're agreements that become real once enough people act as if they are.

For most of history, that storytelling layer was implicit human territory. Machines could calculate, sort, route, optimize. People still wrote the meaning around that work: what counts, what matters, where the group is going.

Nobody really named that boundary, because it was just how the world worked.

I'm calling it the Storyteller Monopoly.

What's changed in the last few years isn't only that models got better at writing. It's that they crossed into the coordination layer. A legal argument that gets taken seriously. A pitch deck that earns a meeting. A positioning doc a marketer would actually ship. An investor memo that moves a room.

Classic automation didn't do that. The power loom replaced hand weaving. The calculator replaced the arithmetic. The spreadsheet replaced the recalculation. In each case, machines took the work and humans kept the meaning.

AI doesn't respect that line.

The clearest way to see this, weirdly, isn't language. It's AlphaGo.

When AlphaGo played Move 37 against Lee Sedol in Game 2 of their 2016 match, commentators thought it was a mistake. Nobody plays Go like that. Then it became the move everyone remembered. The deeper lesson wasn't that a machine beat a champion. It was that thousands of years of human play had only explored a narrow slice of the game.

The interesting thing was range, not speed.

Something similar is starting to happen in narrative. AI won't only generate the stories humans would have written, faster and cheaper. It will land on framings, arguments, and combinations humans would never have tried. The monopoly was never just about who got to tell the story. It was about the boundaries of what stories were even thinkable.

Here's the part that actually matters for you and me.

If plausible stories are now cheap, writing a coherent one stops being the rare skill. Judging whether a coherent story maps to reality becomes the rare skill.

A founder can generate ten convincing positioning narratives in an afternoon. A leader can produce three plausible strategy decks before lunch. An analyst can output a credible investor memo on almost any company by Friday. Saying it well used to be hard. Now it's the floor. The harder question is whether there's something underneath that survives a real customer, a real market, a hard week in the field.

That shift hits a lot of jobs harder than it looks. Much of senior work was secretly storytelling work: sense-making, framing, packaging. That work doesn't disappear, but its scarcity does. Most teams haven't yet updated their definition of "good thinking" to reflect that.

Skepticism has to get more precise, too. The risk isn't that AI produces obvious nonsense. That's easy to throw away. The risk is that it produces clean, confident, well-structured coherence on things that aren't true. A polished due diligence memo looks the same whether or not anyone actually did the diligence. Coherence is persuasive even when it's wrong, and we have very few cultural defenses against it.

Most of our institutions, from courts to capital markets to hiring, were built assuming the storyteller was a human, with human limits on how fast and how convincingly they could spin a narrative. That assumption is quietly going out of date.

Worth keeping that in mind, regardless of what you're building this week.